Using ElasticSearch Machine Learning to Monitor Mails

Imagine this scenario: your marketing automation program is implemented in your favourite MA platform, and everything seems to run fine. The mail is complex and has segmented content, so it is basically a one-to-one email. But you can't help wondering whether all recipients are getting the correct content. Are all elements working? To see what is going on, you add your own address as BCC and check the mails yourself. One million emails later, you're getting tired of constantly checking emails...

One way to end this manual process is to use ElasticSearch, a platform that can store, search and analyse large amounts of data quickly. It ingests streams of data in real time and can easily be connected to other services. ElasticSearch also provides a set of tools that make use of the stored data, including dashboards and alerts. It can store millions of mails, drive a dashboard that updates in real time, and run a machine learning model in the background, all at the same time. ElasticSearch hides most of the complexity so that beginners can use it too, and as you become more skilled you can customise the monitoring and analyse more complex tasks.

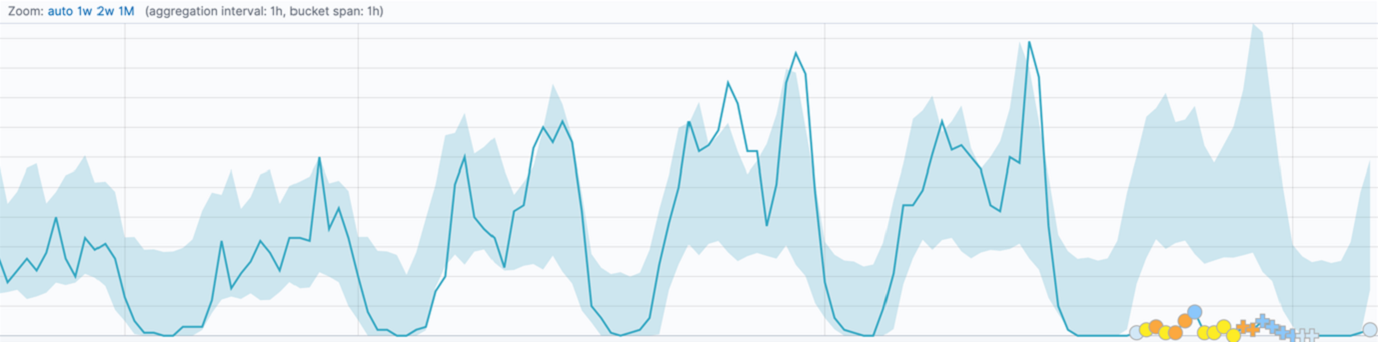

To make sure that your dynamic emails contain all the expected content, the first idea that comes to mind is a threshold alert that checks the amount of content in the emails. However, a threshold alert does not account for natural changes in the send-out and will still flood your inbox with irrelevant error mails. Instead, machine learning can be used to analyse the patterns in the data, taking weekends and other seasonal changes into account. ElasticSearch provides a tool for this: anomaly detection, which builds machine learning models using unsupervised time series analysis. The tool can also create alerts based on the detected anomalies, thereby catching the relevant errors and skipping unnecessary error mails.
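
The difference between the two approaches can be illustrated with a small sketch. It does not reproduce the model ElasticSearch builds; it swaps in a much simpler per-weekday baseline, and the daily counts, the threshold of 500 and the ratio of 0.5 are all made-up values chosen for illustration.

```python
from statistics import mean

# Hypothetical daily send-out counts, Monday first. Weekends are naturally quiet.
week_1 = [980, 1010, 1000, 990, 1020, 120, 100]   # a normal week, used as history
week_2 = [1000, 990, 150, 1010, 1000, 110, 105]   # Wednesday (index 2) is a real failure


def fixed_threshold_alerts(counts, threshold):
    """Return the day indexes flagged by a static 'count below threshold' rule."""
    return [i for i, count in enumerate(counts) if count < threshold]


def weekday_baseline_alerts(counts, history, ratio=0.5):
    """Flag days whose count is below `ratio` times the mean of the same weekday in `history`."""
    alerts = []
    for i, count in enumerate(counts):
        same_weekday = [h for j, h in enumerate(history) if j % 7 == i % 7]
        if count < ratio * mean(same_weekday):
            alerts.append(i)
    return alerts


print(fixed_threshold_alerts(week_2, threshold=500))  # [2, 5, 6]: the failure plus both weekend days
print(weekday_baseline_alerts(week_2, week_1))        # [2]: only the real failure
```

The static rule raises three alerts, two of which are ordinary weekends, while the baseline that knows what a Saturday normally looks like raises only one.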

How can it be used?

Time series analysis is a good tool for modelling data that does not follow the same pattern every day.

The number of mails sent out each day is not constant, and therefore the machine learning model needs to be able to recognise events like weekends and Christmas breaks.

ElasticSearch machine learning finds a pattern in the send-out of mails, and if an expected email fails to send, an alert is sent.

This makes it possible to catch errors immediately, and also to get an overview of the patterns in the data and the seasonal changes.

Setting up mail monitoring

In our case, we would like to know when the number of mails sent out is too low compared to what the machine learning model expected.

The first step is to establish the data source. ElasticSearch stores data in indices; for this case we created an index called "monitor". Every time an email is sent out, all information about it is stored in our "monitor" index. We have different types of mails we would like to monitor, and therefore we decided to create an anomaly detection job for each type.
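
As a rough sketch of what one stored event could look like, the snippet below builds a document per sent mail and serialises it in the newline-delimited format used by the bulk API. Only the index name "monitor" comes from our setup; the field names `mail_type` and `recipient_id` and the sample values are assumptions made for illustration.

```python
import json
from datetime import datetime, timezone

INDEX_NAME = "monitor"  # index name used in this article


def build_mail_event(mail_type, recipient_id, sent_at):
    """Build one document describing a sent mail (field names are assumptions)."""
    return {
        "@timestamp": sent_at.astimezone(timezone.utc).isoformat(),
        "mail_type": mail_type,
        "recipient_id": recipient_id,
    }


def to_bulk_ndjson(events, index=INDEX_NAME):
    """Serialise events as a newline-delimited bulk body: one action line per document."""
    lines = []
    for event in events:
        lines.append(json.dumps({"index": {"_index": index}}))
        lines.append(json.dumps(event))
    return "\n".join(lines) + "\n"  # a bulk body has to end with a newline


events = [
    build_mail_event("welcome", "r-001", datetime(2023, 1, 2, 8, 30, tzinfo=timezone.utc)),
]
print(to_bulk_ndjson(events))
```

The printed body could be posted to the `_bulk` endpoint by whatever service sends the mails. A timestamp on every document matters most here, because the anomaly detection job buckets the data by time.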

The next step is to create the job, which is the task that continuously analyses the data. We start by choosing the index and the job type.

Many cases can be handled with a single metric job; our case, however, requires that each job uses data from only one email type, so that each email type is monitored separately.

Using the advanced job type, we can write a query that selects the specific data needed for the job.
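
A minimal sketch of such a query, wrapped in a datafeed configuration, could look like the following. The field name `mail_type`, the mail type "welcome" and the job id are hypothetical, and the term filter assumes that `mail_type` is mapped as a keyword field.

```python
import json


def mail_type_query(mail_type):
    """Query that keeps only documents of one mail type (assumes a keyword field `mail_type`)."""
    return {"bool": {"filter": [{"term": {"mail_type": mail_type}}]}}


def build_datafeed(job_id, mail_type, index="monitor"):
    """Datafeed body that feeds one anomaly detection job from the given index."""
    return {
        "job_id": job_id,
        "indices": [index],
        "query": mail_type_query(mail_type),
    }


# Hypothetical job id, one datafeed per mail type.
datafeed = build_datafeed("monitor-welcome-low-count", "welcome")
print(json.dumps(datafeed, indent=2))
```

A body like this is what the datafeed API (`PUT _ml/datafeeds/<datafeed_id>`) accepts; the wizard in Kibana builds the equivalent for you when you paste the query into the advanced job form.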

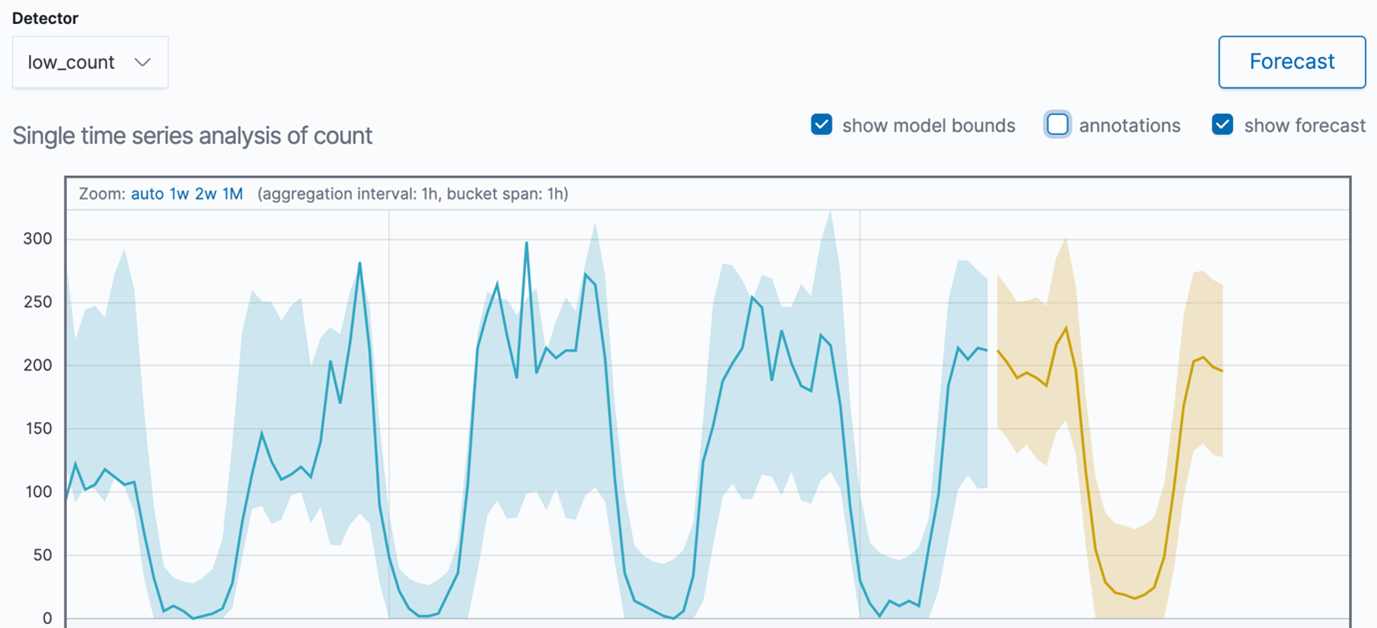

Afterwards, we configure the datafeed. This can be left at its defaults or, as in our case, used to define the mail type we want to focus on. Then we need to create a detector. We only want to be notified when the count is too low (not too high), so we set the function to "low_count". To limit resource use and help the model make more precise estimates, we set the bucket span to one hour (1h), because we have several years of data with a lot coming in each day. Next, we give the job a name and enable both model plot (pattern predictions) and model change annotations (adjustments for seasonal changes etc.). The model is then created, and within minutes we can see the patterns in the historical data.
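
For readers who prefer the API over the wizard, the choices described above map roughly onto the job body sketched below. The detector function, the bucket span and the two model plot settings follow the text; the time field name `@timestamp`, the description and the mail type are assumptions.

```python
import json


def build_low_count_job(mail_type, bucket_span="1h", time_field="@timestamp"):
    """Anomaly detection job body that only reacts to unusually low event counts."""
    return {
        "description": f"Low send-out count for mail type '{mail_type}'",
        "analysis_config": {
            "bucket_span": bucket_span,                # size of each analysis interval
            "detectors": [{"function": "low_count"}],  # ignore unusually high counts
        },
        "data_description": {"time_field": time_field},
        "model_plot_config": {
            "enabled": True,              # store the model bounds for plotting
            "annotations_enabled": True,  # record model change annotations
        },
    }


job = build_low_count_job("welcome")  # hypothetical mail type
print(json.dumps(job, indent=2))
```

This is the kind of body the create-job API (`PUT _ml/anomaly_detectors/<job_id>`) accepts. Keeping the builder as a function makes it easy to create one job per mail type with identical settings.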

Setting up the alert system

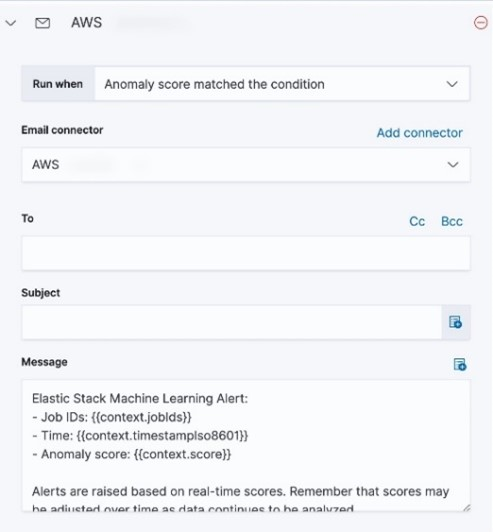

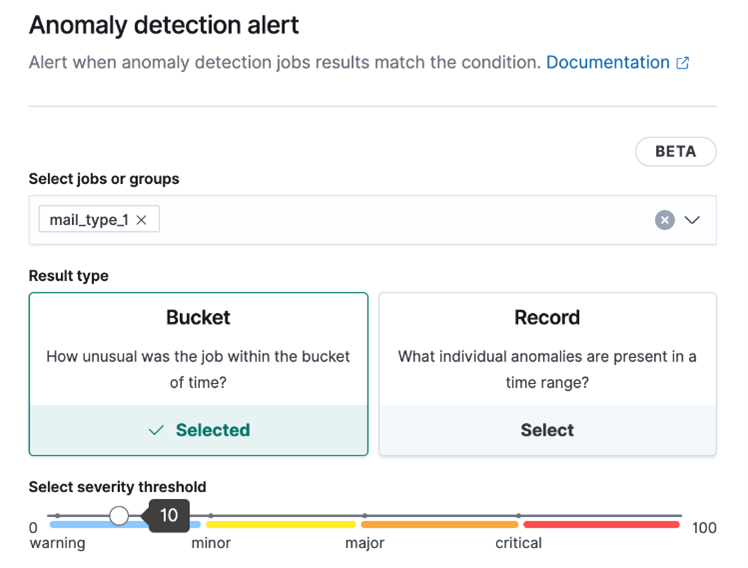

After the models are created and we are happy with the patterns they follow, we create an anomaly detection alert for each model. These alerts need an action to send a notification, so we first create an email connector dedicated to the ML alerts.

We created bucket alerts on each job and kept the severity threshold low because the data is quite stable. Some of the mail types are a bit more sensitive and do not have a clear pattern yet; for these we increased the severity threshold to 75.
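
The effect of the severity threshold can be sketched as a simple filter over bucket results, each of which carries an anomaly score between 0 and 100. This is an illustration of the idea, not how the alerting rule is implemented. The default threshold of 25, the mail type names and the sample scores are assumptions; only the value 75 comes from our setup.

```python
DEFAULT_THRESHOLD = 25  # assumed value for a "low" threshold on stable mail types
THRESHOLDS = {"newsletter": 75}  # hypothetical mail type without a clear pattern yet


def threshold_for(mail_type):
    """Look up the severity threshold for a mail type, falling back to the default."""
    return THRESHOLDS.get(mail_type, DEFAULT_THRESHOLD)


def buckets_to_alert(buckets, mail_type):
    """Keep only the buckets whose anomaly score reaches the mail type's threshold."""
    limit = threshold_for(mail_type)
    return [bucket for bucket in buckets if bucket["anomaly_score"] >= limit]


# Made-up bucket results; timestamps are epoch milliseconds.
sample_buckets = [
    {"timestamp": 1672646400000, "anomaly_score": 12.0},
    {"timestamp": 1672650000000, "anomaly_score": 48.3},
    {"timestamp": 1672653600000, "anomaly_score": 91.5},
]

print(len(buckets_to_alert(sample_buckets, "welcome")))     # 2
print(len(buckets_to_alert(sample_buckets, "newsletter")))  # 1
```

With the higher threshold, the noisier mail type only notifies on the most severe anomaly, which is the behaviour we wanted while its pattern settles.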

Tips for success

The jobs can be customised in several ways, especially when using the query tool to focus on specific data points. The "Dev tools/Console" can be used to test the queries before making the jobs.
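
One way to test a query before creating a job is to count the matching mails per hour and compare the result with what you expect. The sketch below builds such a search and prints it in the form the Console accepts. The field names `mail_type` and `@timestamp`, the mail type and the seven-day window are assumptions.

```python
import json


def hourly_count_search(mail_type, start="now-7d/d"):
    """Search body that counts mails of one type per hour, without returning documents."""
    return {
        "size": 0,  # only the aggregation is of interest
        "query": {
            "bool": {
                "filter": [
                    {"term": {"mail_type": mail_type}},
                    {"range": {"@timestamp": {"gte": start}}},
                ]
            }
        },
        "aggs": {
            "mails_per_hour": {
                "date_histogram": {"field": "@timestamp", "fixed_interval": "1h"}
            }
        },
    }


def as_console_request(index, body):
    """Format a search body so it can be pasted into the Dev Tools Console."""
    return f"GET {index}/_search\n{json.dumps(body, indent=2)}"


print(as_console_request("monitor", hourly_count_search("welcome")))
```

The one-hour interval matches the bucket span of the job, so the numbers returned are close to what the detector will see for each bucket.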

The model performs better the more data it has, especially for once-a-year occurrences such as the Christmas break. In our experience, it needs at least one or two years of data to build an accurate pattern.

Within ElasticSearch there are several tools to visualise the findings. The timeline overview of the individual jobs can be added to dashboards and included in reports, and the Single Metric Viewer has a function to forecast the pattern of the data visually over a specific time range.

In conclusion, ElasticSearch has created a great tool that can help you monitor your data and find patterns that only a machine can find. Utilising this can help spot errors in the mail send-outs and correct them before it is too late.

If you want to read more, the official documentation includes guides and videos to get started with machine learning in ElasticSearch.